As I said in “No, you don’t have to learn LangChain”, we shouldn’t get distracted by the artificial complexity introduced by our frameworks. LangChain is mostly a wrapper around the REST APIs of various LLM providers. Useful? Yes: switching between models becomes easy.

But here’s a mystery I can’t explain.

When I added Gemini as a fallback to DeepSeek (see yesterday’s post about DeepSeek refusing to touch Chinese politics), I thought it would be straightforward.

By default, I’m instantiating my chat model thus:

llm = init_chat_model(model='deepseek-chat', model_provider='deepseek')

And if a subsequent call errored out with DeepSeek’s HTTP 400 “Content Exists Risk”, I’d go with Gemini instead:

llm = init_chat_model(model='gemini-2.5-flash', model_provider='google_vertexai')
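
To make the fallback concrete, here is a minimal sketch of how such a switch could be wired up. It is an illustration under assumptions, not the exact code from my app: the helper names (`looks_like_content_risk`, `invoke_with_fallback`, `make_deepseek`, `make_gemini`) are hypothetical, and matching on the error text is a guess at how the HTTP 400 surfaces in the exception. Check what your client library actually raises.

```python
from typing import Any, Callable


def looks_like_content_risk(exc: Exception) -> bool:
    # Assumption: the provider's refusal shows up in the exception text.
    # Replace this with a check on the real exception type if you know it.
    return "Content Exists Risk" in str(exc)


def invoke_with_fallback(
    make_primary: Callable[[], Any],
    make_fallback: Callable[[], Any],
    prompt: str,
) -> Any:
    """Try the primary model; build the fallback only when it is needed."""
    try:
        return make_primary().invoke(prompt)
    except Exception as exc:
        if not looks_like_content_risk(exc):
            raise  # unrelated errors should not be swallowed
        # The fallback is constructed lazily, so its import and memory
        # cost is only paid when the primary model refuses.
        return make_fallback().invoke(prompt)


def make_deepseek() -> Any:
    # Imported on demand so this module loads without LangChain installed.
    from langchain.chat_models import init_chat_model
    return init_chat_model(model="deepseek-chat", model_provider="deepseek")


def make_gemini() -> Any:
    from langchain.chat_models import init_chat_model
    return init_chat_model(model="gemini-2.5-flash", model_provider="google_vertexai")


# Usage (needs the provider packages and credentials):
# answer = invoke_with_fallback(make_deepseek, make_gemini, "Tell me about ...")
```

Passing factories instead of ready-made models keeps the second provider out of memory until the first one has actually refused.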

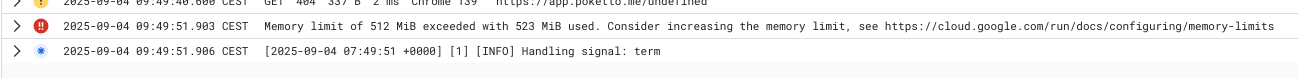

So far, so good, until I deployed it to Cloud Run. There, every time the fallback kicked in, the instance crashed:

Memory limit of 512 MiB exceeded with 523 MiB used. Consider increasing the memory limit…

I dug deeper on my local machine: calling init_chat_model with google_vertexai instantly doubled the memory consumption of my Python process!
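
If you want to reproduce a measurement like this, one rough way is the standard library's `tracemalloc`. The sketch below is an assumption-laden illustration rather than the method I used: `measure_python_allocations` and `build_vertex_model` are hypothetical helper names, and `tracemalloc` only counts allocations made through Python's allocator, so the process size reported by the OS (which is what Cloud Run enforces) can be higher.

```python
import tracemalloc
from typing import Any, Callable, Tuple


def measure_python_allocations(action: Callable[[], Any]) -> Tuple[Any, float]:
    """Run `action`; return (result, MiB of Python allocations still held)."""
    tracemalloc.start()
    before, _peak = tracemalloc.get_traced_memory()
    result = action()  # keep the result alive while we take the second reading
    after, _peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return result, (after - before) / (1024 * 1024)


def build_vertex_model() -> Any:
    # Imported inside the function so the import cost is part of the measurement.
    from langchain.chat_models import init_chat_model
    return init_chat_model(model="gemini-2.5-flash", model_provider="google_vertexai")


# Usage (needs langchain and the Vertex AI integration installed):
# _, mib = measure_python_allocations(build_vertex_model)
# print(f"init_chat_model(google_vertexai) retained about {mib:.0f} MiB")
```

Run it in a fresh interpreter, because modules that are already imported will not be counted a second time.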

I didn’t want to debug this forever, so I swapped Gemini out for Claude:

llm = init_chat_model("claude-3-5-sonnet-latest", model_provider="anthropic")

Result: No memory issues at all.

So… riddle me this: why in the world would LangChain need 200+ MB of memory just to launch a Vertex AI chat model that ultimately just sends a REST call to Google?