Recently, I wrote about adopting PostHog for poketto.me. At first, I thought I’d use it for the basics:

📆 Daily & weekly active users (DAU/WAU)

📎 Core events (URLs saved, links shared, etc.)

🚨 Error tracking and alerting

But then I realized: analytics can do much more. In fact, PostHog replaced one of my home-grown tools: my “podcast heuristic accuracy guesstimator.” Let me explain.

When a user adds content to their podcast feed, poketto.me has to gauge three things:

a) How long will it take to generate the episode? (So that I can give nice, visual feedback as discussed in TIL #68)

b) How long will the episode actually play?

c) Will the episode be truncated by the user’s quota (see TIL #81)?

My early heuristics looked like this:

⏱ ~0.33 seconds to generate each spoken word

⏱ ~0.5 seconds of audio per word

📄 Script length ≈ 90% of original text length
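To make the three factors concrete, here is a minimal sketch of how such an estimator could look. It is not poketto.me's actual code: the function and field names are hypothetical, and it assumes that "length" is measured in words and that the quota is a remaining audio budget in seconds.

```python
from dataclasses import dataclass

# Factors from the list above; treat them as starting guesses to be refined.
SCRIPT_WORDS_PER_SOURCE_WORD = 0.9   # script length ≈ 90% of original text
GENERATION_SECONDS_PER_WORD = 0.33   # time to generate one spoken word
AUDIO_SECONDS_PER_WORD = 0.5         # playback time per spoken word


@dataclass
class EpisodeEstimate:
    script_words: int
    generation_seconds: float
    audio_seconds: float
    truncated: bool


def estimate_episode(source_words: int, quota_seconds_left: float) -> EpisodeEstimate:
    """Estimate script size, generation time, and playback time for one save."""
    script_words = round(source_words * SCRIPT_WORDS_PER_SOURCE_WORD)
    audio_seconds = script_words * AUDIO_SECONDS_PER_WORD
    return EpisodeEstimate(
        script_words=script_words,
        generation_seconds=script_words * GENERATION_SECONDS_PER_WORD,
        audio_seconds=audio_seconds,
        # Assumption: the quota is a remaining audio budget in seconds.
        truncated=audio_seconds > quota_seconds_left,
    )
```

Keeping the factors as named constants means that refining a heuristic later is a one-line change.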

How did I measure that? With the most primitive tool of all: printf logging. After every test run, I scraped the Cloud Run logs, pasted the numbers into a spreadsheet, recalculated the factors, and updated my code. It worked, but it was clunky and error-prone.

Enter PostHog 🦔

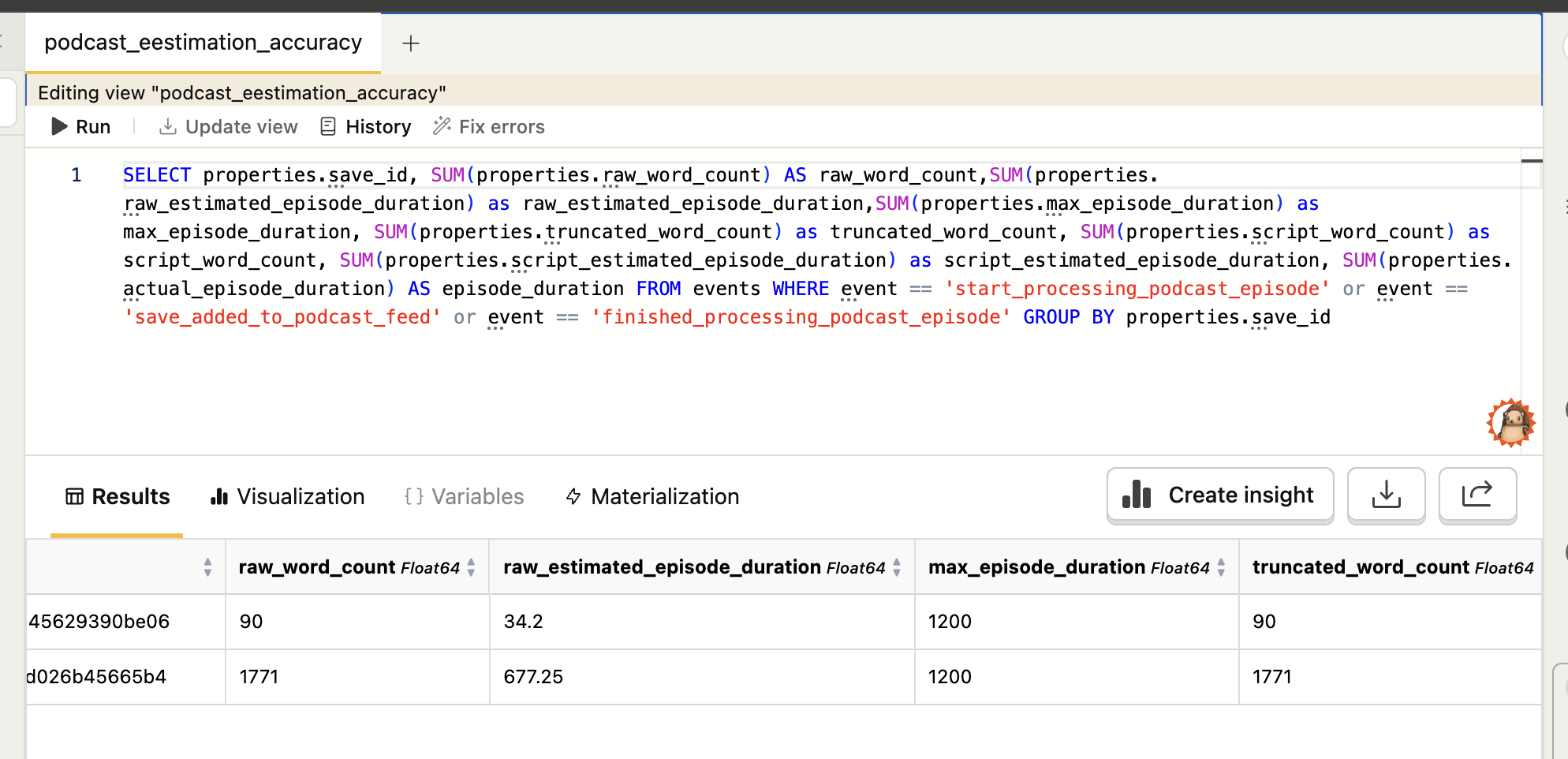

Now I track three events for each podcast episode:

🔵 save_added_to_podcast_feed → captures raw word count + estimated duration

🔵 start_processing_podcast_episode → captures the TTS-optimized script length

🔵 finished_processing_podcast_episode → captures the actual duration
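The three events could be emitted roughly like this. The sketch deliberately takes a `capture` callable instead of calling an analytics SDK directly, so the exact client signature is an assumption you would adapt to your PostHog library version. The event names come from the list above; the property names and the `episode_id` used to tie the events together are hypothetical.

```python
from typing import Any, Callable

# Hypothetical signature: (distinct_id, event_name, properties) -> None.
# Wrap your analytics client so that it matches this shape.
Capture = Callable[[str, str, dict[str, Any]], None]


def track_save_added(capture: Capture, user_id: str, episode_id: str,
                     word_count: int, estimated_seconds: float) -> None:
    """Raw word count and the heuristic's duration estimate."""
    capture(user_id, "save_added_to_podcast_feed", {
        "episode_id": episode_id,
        "word_count": word_count,
        "estimated_seconds": estimated_seconds,
    })


def track_processing_started(capture: Capture, user_id: str, episode_id: str,
                             script_word_count: int) -> None:
    """Length of the TTS-optimized script."""
    capture(user_id, "start_processing_podcast_episode", {
        "episode_id": episode_id,
        "script_word_count": script_word_count,
    })


def track_processing_finished(capture: Capture, user_id: str, episode_id: str,
                              actual_seconds: float) -> None:
    """Actual duration of the generated episode."""
    capture(user_id, "finished_processing_podcast_episode", {
        "episode_id": episode_id,
        "actual_seconds": actual_seconds,
    })
```

Passing the capture function in also makes the tracking easy to test: a test can hand over a function that appends to a list and then inspect what was recorded.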

With these in PostHog’s data warehouse, I can easily query ratios, refine my heuristics, and spot divergences across languages or voice models. No manual log-scraping, no spreadsheet acrobatics.
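The ratio calculation itself is simple arithmetic. As a rough illustration, the sketch below recomputes two of the factors per language from rows that have already been joined per episode. It assumes the rows were exported from the warehouse as plain dictionaries; the field names (`language`, `word_count`, `script_word_count`, `actual_seconds`) are hypothetical, and in practice the same aggregation could just as well be a query inside PostHog.

```python
from collections import defaultdict
from typing import Iterable


def refine_factors(episodes: Iterable[dict]) -> dict[str, dict[str, float]]:
    """Recompute heuristic factors per language from observed episodes."""
    totals = defaultdict(lambda: {"words": 0, "script": 0, "seconds": 0.0})
    for ep in episodes:
        t = totals[ep["language"]]
        t["words"] += ep["word_count"]
        t["script"] += ep["script_word_count"]
        t["seconds"] += ep["actual_seconds"]

    factors = {}
    for language, t in totals.items():
        if t["words"] == 0 or t["script"] == 0:
            continue  # not enough data to compute a ratio
        factors[language] = {
            # Script words per source word (the "90%" heuristic).
            "script_ratio": t["script"] / t["words"],
            # Seconds of audio per script word (the "0.5 s" heuristic).
            "audio_seconds_per_word": t["seconds"] / t["script"],
        }
    return factors
```

Dividing the summed totals, rather than averaging per-episode ratios, weights long episodes more heavily, which keeps a handful of very short saves from skewing the factors. Grouping by voice model instead of language would only require changing the grouping key.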

Turns out: product analytics isn’t just about counting active users. Sometimes it’s also the right place to offload a job your own code and tooling used to do by hand.