Running text-to-speech in the cloud is fun—until it isn’t.

Early on, I didn’t think much about thread safety. In my own testing, there was rarely more than one TTS task running at a time, so nothing went wrong. But once more users started using the feature, strange bugs popped up:

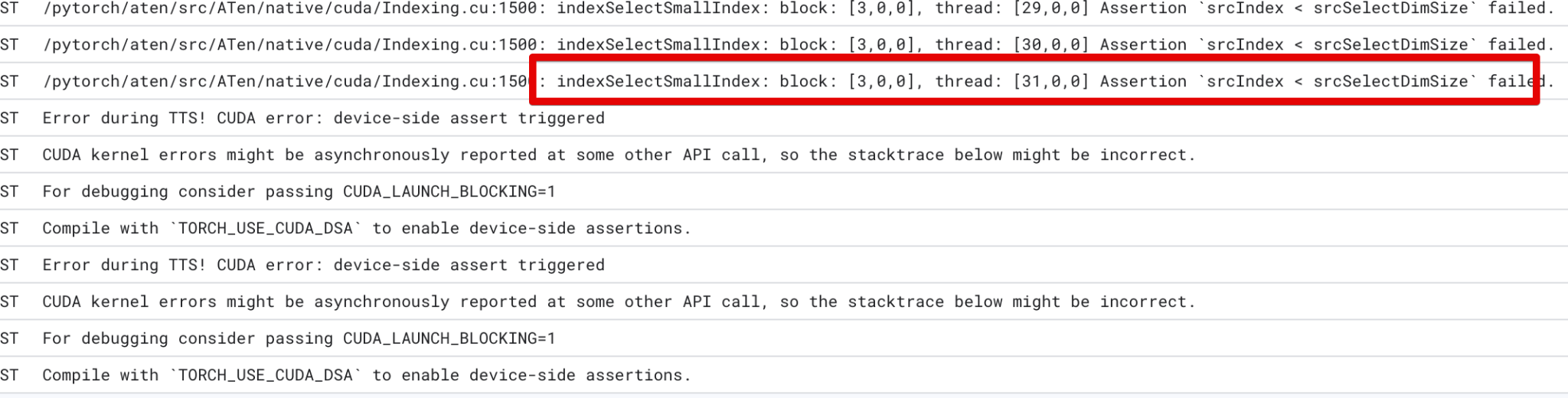

Errors like “Assertion srcIndex < srcSelectDimSize failed” started showing up in the logs—and worse, once triggered, the entire Cloud Run instance would become unusable until a redeploy.

Digging in, I realized the culprit: When multiple TTS requests hit the same instance concurrently, all would fail. Why?

Because the parts of my TTS model that run on the CUDA GPU are not thread-safe. Only one request can use them at a time.
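
For illustration, here is a minimal sketch of what "one at a time" means in code. It is not the service's actual code: `SerializedSynthesizer` and its `synthesize` method are hypothetical stand-ins for the GPU-bound model call, and a plain `threading.Lock` does the serializing.

```python
import threading
import time


class SerializedSynthesizer:
    """Hypothetical stand-in for a TTS model whose GPU part is not thread-safe."""

    def __init__(self):
        self._lock = threading.Lock()  # guards the "GPU" section
        self._active = 0               # calls currently inside the section
        self.peak = 0                  # highest concurrency ever observed

    def synthesize(self, text: str) -> bytes:
        # Only one thread at a time gets past this lock; the rest wait.
        with self._lock:
            self._active += 1
            self.peak = max(self.peak, self._active)
            time.sleep(0.01)           # simulate inference time
            self._active -= 1
            return text.encode("utf-8")


def run_concurrently(synth: SerializedSynthesizer, texts):
    """Fire one thread per text and collect the results in input order."""
    results = [None] * len(texts)

    def worker(i, t):
        results[i] = synth.synthesize(t)

    threads = [
        threading.Thread(target=worker, args=(i, t)) for i, t in enumerate(texts)
    ]
    for th in threads:
        th.start()
    for th in threads:
        th.join()
    return results
```

Even with several threads calling `synthesize` at once, `peak` never goes above 1, because every caller has to wait its turn at the lock.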

Here’s my quick fix: I reconfigured Gunicorn to run with just one worker process and one thread, and with the worker timeout disabled (`--timeout 0`), so requests queue up instead of running in parallel.

    CMD ["gunicorn", "-w", "1", "--threads", "1", "--timeout", "0", "--keep-alive", "30", "--bind=0.0.0.0:8080", "main:app"]
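
The same settings can also live in a Gunicorn config file instead of on the command line. This is a sketch of an equivalent `gunicorn.conf.py`, assuming it sits in the directory Gunicorn is started from (where it is picked up by default); the values are copied from the command above.

```python
# gunicorn.conf.py: equivalent of the CLI flags above

bind = "0.0.0.0:8080"  # --bind=0.0.0.0:8080
workers = 1            # -w 1: a single worker process
threads = 1            # --threads 1: a single thread in that worker
timeout = 0            # --timeout 0: disable the worker timeout
keepalive = 30         # --keep-alive 30: seconds to hold idle keep-alive connections
```

With that file in place, the container command would shrink to `gunicorn main:app`.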

Result: No more race conditions. Just good ol’ first-come, first-served TTS.